EDUC 822

Simon Fraser University – Faculty of Education

Evaluation of Educational Programs

MEd Post-Secondary, VCC Cohort

Professor: Dr. Larry Johnson

Student: Kathryn Truant

July 5, 2020

Introduction:

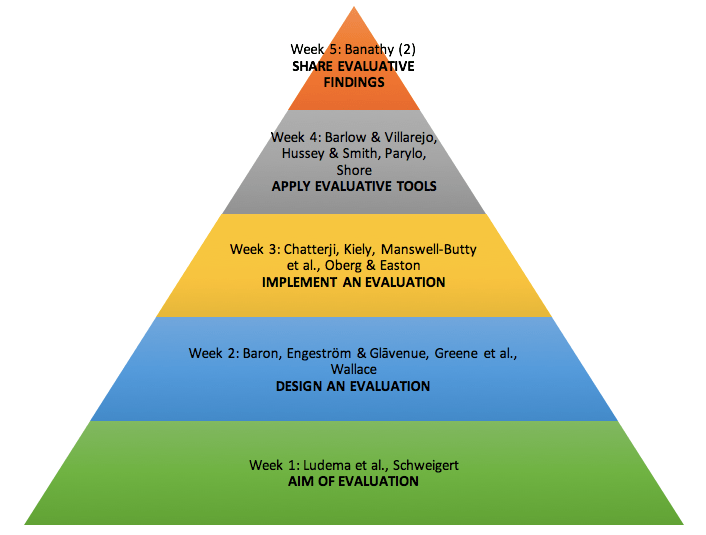

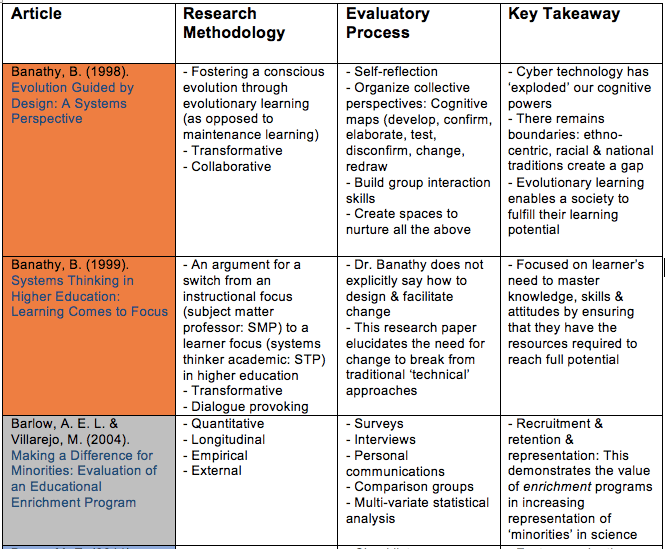

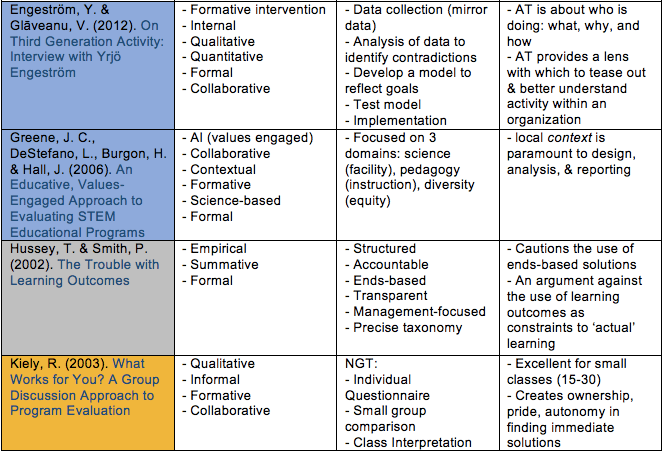

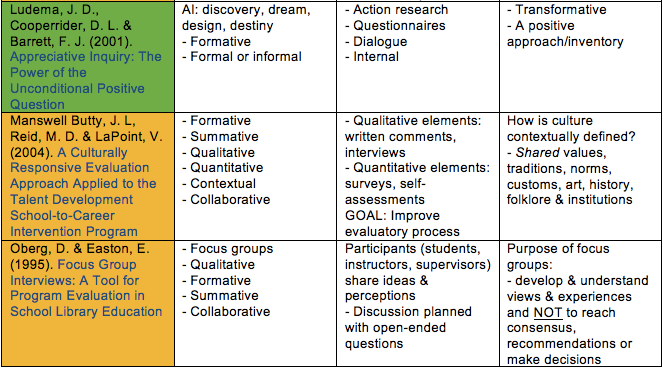

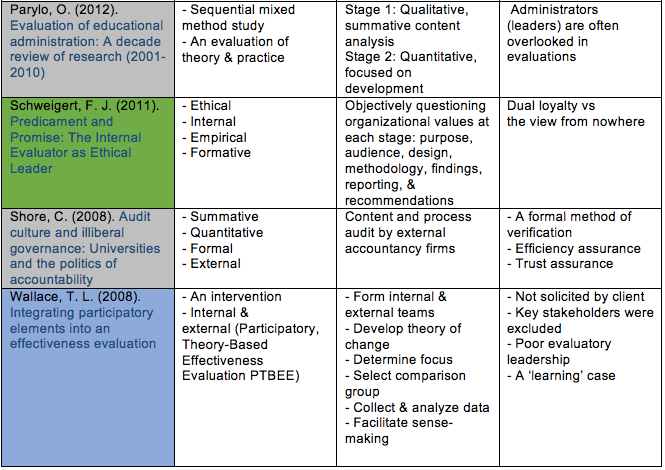

Since the onset of this course, I have been categorizing new ideas and attempting to make sense of the evaluatory strategies used by the researchers in all the assigned readings. I created a summative chart so that I could cross-reference the various methodologies and processes, paired with my own key takeaways; the following is my empirical and theoretical collection from EDUC 822. Please note: the descriptions are purely reflective, based on class attendance and the assigned readings; they have no experiential merit (i.e., these categorizations make perfect sense to me in the context of my own interpretation).

I purposefully omitted Janet Wall’s Program Evaluation Model 9-Step Process (n.d.) and Sanders and Sullins’ Evaluating School Programs: An Educator’s Guide (2006) from the list because, along with the summarized research articles, I will be using these two resources as guides in constructing my case study, and the information they contain has too many elements to articulate in my chart format. Further, I elected to omit the ‘skim and scan’ articles from EDUC 822 because I want this guide to be comprehensive, with the brevity, focus, and taxonomy that foster the scaffolding of new ideas.

Types of Research Methodologies That I Have Encountered

- Formal (a summative evaluation)

- Formative (the focus is on influential findings: can be formal or informal)

- Informal (a formative evaluation based on observations)

- Qualitative (a measurement of quality as in an influential phenomenon)

- Quantitative (a measurement of quantity as in production)

- Summative (the focus is on the outcome: typically, a formal evaluation)

These methodologies can be:

- Action Research (seeks transformative change through research, reflection, and action)

- Appreciative Inquiry (AI: a strengths-based, positive approach which engages stakeholders in self-determined change)

- External (the evaluation design and evaluatory process do not always involve collaboration with stakeholders)

- Internal (the evaluation design and evaluatory process include collaboration with stakeholders)

- Science-based (utilizing empirical data, statistical analysis, and content)

- Theory-based (projections)

- Any combination of the above (Mixed Methodologies, e.g. PTBEE, ETMM)

Types of Evaluatory Processes That I Have Encountered

- Collaborations

- Comparison groups (multivariate groups selected to compare baseline data)

- Contextual approaches

- Extended-Term Mixed Methods (ETMM)

- Focus groups (small demographically diverse groups formed to garner insight)

- Interventions

- Nominal Group Techniques (NGT)

- Participatory Theory-Based Effectiveness Evaluation (PTBEE)

- Peer reviews, literature reviews, and research papers

- Surveys, questionnaires, and dialogue generating activities

Evaluative Literature Taxonomy

Banathy, B. H. (1998). Evolution guided by design: A systems perspective. Systems Research and Behavioral Science, 15 (3), 161-172.

Banathy, B. H. (1999). Systems thinking in higher education: Learning comes to focus. Systems Research and Behavioral Science, 16 (2), 133-145.

Barlow, A. E. L., & Villarejo, M. (2004). Making a difference for minorities: Evaluation of an educational enrichment program. Journal of Research in Science Teaching, 41 (9), 861-881.

Baron, M. E. (2011). Designing internal evaluation for a small organization with limited resources. In B. B. Volkov & M. E. Baron (Eds.), Internal evaluation in the 21st century. New Directions for Evaluation, 132, 87-89.

Chatterji, M. (2005). Evidence on “what works”: An argument for extended-term mixed-method (ETMM) evaluation designs. Educational Researcher, 34 (5), 14-24.

Engeström, Y., & Glăveanu, V. (2012). On third generation activity theory: Interview with Yrjö Engeström. Europe’s Journal of Psychology, 8 (4), 515-518.

Greene, J. C., DeStefano, L., Burgon, H., & Hall, J. (2006). An educative, values-engaged approach to evaluating STEM educational programs. New Directions for Evaluation, 109, 53-71.

Hussey, T., & Smith, P. (2002). The trouble with learning outcomes. Active Learning in Higher Education, 3 (3), 220-233.

Kiely, R. (2003). What works for you? A group discussion approach to programme evaluation. Studies in Educational Evaluation, 29 (4), 293-314.

Ludema, J. D., Cooperrider, D. L., & Barrett, F. J. (2001). Appreciative inquiry: The power of the unconditional positive question. Graduate School of Business & Public Policy (GSBPP).

Manswell Butty, J. L., Reid, M. D., & LaPoint, V. (2004). A culturally responsive evaluation approach applied to the talent development school-to-career intervention program. New Directions for Evaluation, 101, 37-47.

Oberg, D., & Easton, E. (1995). Focus group interviews: A tool for program evaluation in school library education. Education for Information, 13 (2), 117-129.

Parylo, O. (2012). Evaluation of educational administration: A decade review of research (2001-2010). Studies in Educational Evaluation, 38, 73-83.

Sanders, J. R., & Sullins, C. D. (2006). Evaluating school programs: An educator’s guide (3rd ed.). Thousand Oaks, CA: Corwin Press.

Schweigert, F. J. (2011). Predicament and promise: The internal evaluator as ethical leader. In B. B. Volkov & M. E. Baron (Eds.), Internal evaluation in the 21st century. New Directions for Evaluation, 132, 43-56.

Shore, C. (2008). Audit culture and illiberal governance: Universities and the politics of accountability. Anthropological Theory, 8 (3), 278-298.

Wall, J. E. (n.d.). Program Evaluation Model 9-Step Process. Retrieved from https://www.janetwall.net/attachments/File/9_Step_Evaluation_Model_Paper.pdf

Wallace, T. L. (2008). Integrating participatory elements into an effectiveness evaluation. Studies in Educational Evaluation, 34 (4), 201-207.
